Emotion recognition is the process of identifying human emotion, most typically from facial expressions as well as from verbal expressions. Humans do this automatically, but computational methodologies have also been developed.

Scientific Definition of Emotion

An emotion has to be differentiated from the concepts of feeling, mood and personality. A feeling is a physical change, for example a racing heartbeat when you sense a masked person behind a wall. A feeling only becomes an emotion when this physical change is evaluated cognitively.

If, for example, someone traces his heartbeat back to the masked man, one would speak of fear. However, if he traces it back to his secret love, one would speak of joy. Emotions usually last only a few seconds and have a clearly defined onset and offset. Moods, on the other hand, can last for hours, days or even weeks. If someone says he is in a bad mood today, he is describing a mood, which does not necessarily have anything to do with emotions.

A particular mood can often increase or decrease the probability that a particular emotion occurs, but the two must be separated analytically. Finally, the personality of a person needs to be differentiated from mood. A choleric person, for example, is permanently negatively over-excited. One can thus imagine the terms feeling, emotion, mood and personality arranged on a timeline, with feeling at the short-term end and personality at the long-term end.

Human

Humans show universal consistency in recognising emotions but also show a great deal of variability between individuals in their abilities. This has been a major topic of study in psychology.

Cross-Race Effect

Emotion recognition between two people is subject to strong fluctuations. Psychology has identified a phenomenon called the cross-race effect: the emotion recognition rate is lower when the face being read does not belong to the same culture or ethnicity as the observer. However, this effect can be overcome through training.

Visual Mimic Recognition

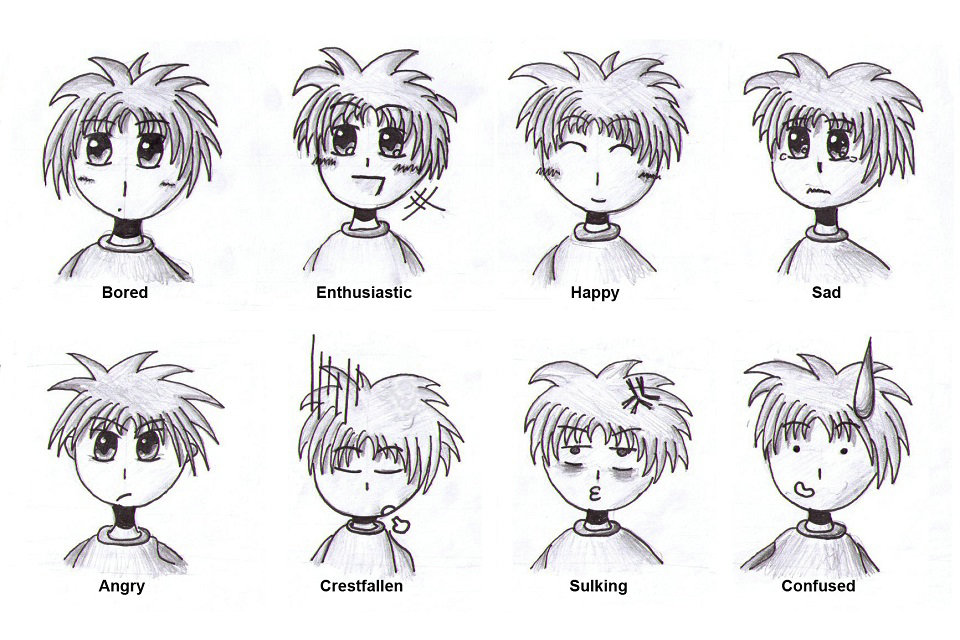

This part is commonly referred to as facial expression recognition. A digital video camera or equivalent optical input device serves as the human-machine interface. Methods of facial recognition are used to analyze the characteristics of the facial surface. Automatic classification makes it possible to assign the facial expressions in successive frames to a cluster that may be associated with an emotion. Research has shown, however, that only 30% of facial expressions correspond to the emotions actually felt. Therefore one should not equate the recognition of visual facial expressions with emotion recognition. The biological background of visual emotion recognition is the simulation of a human optic nerve in a robot.
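The step of assigning frames to clusters can be illustrated with a minimal sketch. It assumes that each video frame has already been reduced to a small feature vector; the two features used here (`mouth_corner_lift`, `brow_lowering`), the sample values and the choice of plain k-means are illustrative assumptions, not a method described in the source.

```python
from math import dist


def kmeans(points, centroids, iterations=10):
    """Plain k-means: assign each point to its nearest centroid, then move the centroids."""
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(iterations):
        # Assignment step: index of the closest centroid for every frame.
        labels = [
            min(range(len(centroids)), key=lambda k: dist(p, centroids[k]))
            for p in points
        ]
        # Update step: each centroid becomes the mean of its assigned frames.
        for k in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == k]
            if members:
                centroids[k] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return labels, centroids


# Hypothetical per-frame features: (mouth_corner_lift, brow_lowering), both in [0, 1].
frames = [(0.9, 0.1), (0.8, 0.2), (0.85, 0.15), (0.1, 0.9), (0.2, 0.8), (0.15, 0.85)]

# Initial centroids are picked by hand here so that the run is deterministic.
labels, centroids = kmeans(frames, centroids=[frames[0], frames[3]])
print(labels)
```

The clusters carry no emotion names by themselves. Whether a cluster corresponds to an emotion still has to be established separately, which is the caveat raised in the paragraph above.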

Emotion induction

For experimental settings in emotion psychology, behavioral ethology, neuropsychology and many other sciences, it is often important to “generate” specific emotions under laboratory conditions. Emotion induction is one of the most difficult areas of emotion research. Several meta-analyses on this topic have identified the methods that induce emotions most effectively.

First and foremost is the capture of emotion in real life (field research). Because of its low internal validity, this is often avoided. The second method, which combines high internal validity with high external validity, is emotional recall, in which one tries to evoke memories from emotional memory. Induction methods such as the IAPS, or methods that use supposedly emotion-inducing film sequences or pieces of music, are discouraged for experiments outside of EEG emotion research. All of these methods remain without proof of specific effectiveness. Robotics often uses idealized experimental procedures, for example:

An induction method is supposed to induce an emotion in humans.

The person expresses the emotion through a changed facial expression.

A webcam on the computer captures the new facial expression.

The computer automatically classifies the expression by labelling it with the emotion that was previously induced.

After completing the learning phase, the AI should be able to recognize emotions independently, without having been taught by a human being. However, since the induction method is often not tested for efficacy and the induced emotions are not evaluated during the experiment itself, these idealized experimental procedures in robotics often remain erroneous and incomplete.
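The idealized procedure can be sketched as a small supervised learner in which the induced emotion serves directly as the training label. The nearest-centroid classifier, the two-dimensional feature vectors and the sample values are all illustrative assumptions; the source does not specify a particular algorithm.

```python
from collections import defaultdict
from math import dist


def train_centroids(samples):
    """Average the feature vectors recorded for each induced emotion.

    samples: list of (induced_emotion, feature_vector) pairs. The label is
    simply the emotion the induction method was *supposed* to produce; it is
    never checked against what the person actually felt.
    """
    grouped = defaultdict(list)
    for emotion, features in samples:
        grouped[emotion].append(features)
    return {
        emotion: tuple(sum(axis) / len(vectors) for axis in zip(*vectors))
        for emotion, vectors in grouped.items()
    }


def classify(centroids, features):
    """Return the emotion whose centroid is closest to the new expression."""
    return min(centroids, key=lambda emotion: dist(features, centroids[emotion]))


# Hypothetical webcam-derived features captured after each induction.
training = [
    ("joy", (0.9, 0.1)),
    ("joy", (0.8, 0.2)),
    ("fear", (0.1, 0.9)),
    ("fear", (0.2, 0.7)),
]
model = train_centroids(training)
prediction = classify(model, (0.75, 0.3))
print(prediction)
```

The sketch makes the weakness visible: if the induction fails, the training labels are wrong, and the classifier learns those wrong labels without any signal that something is off.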

Automatic

This process leverages techniques from multiple areas, such as signal processing, machine learning, and computer vision. Different methodologies and techniques may be employed to interpret emotion, such as Bayesian networks, Gaussian Mixture Models and Hidden Markov Models.

Approaches

The task of emotion recognition often involves the analysis of human expressions in multimodal forms such as text, audio, or video. Different emotion types are detected through the integration of information from facial expressions, body movement and gestures, and speech. Existing approaches to classifying emotion types fall into three main categories: knowledge-based techniques, statistical methods, and hybrid approaches.

Knowledge-based Techniques

Knowledge-based techniques (sometimes referred to as lexicon-based techniques) utilize domain knowledge and the semantic and syntactic characteristics of language in order to detect certain emotion types. In this approach, it is common to use knowledge-based resources during the emotion classification process, such as WordNet, SenticNet, ConceptNet, and EmotiNet, to name a few. One of the advantages of this approach is the accessibility and economy brought about by the wide availability of such knowledge-based resources. A limitation of this technique, on the other hand, is its inability to handle concept nuances and complex linguistic rules.

Knowledge-based techniques can be classified into two main categories: dictionary-based and corpus-based approaches. Dictionary-based approaches find opinion or emotion seed words in a dictionary and search for their synonyms and antonyms to expand the initial list of opinions or emotions. Corpus-based approaches, on the other hand, start with a seed list of opinion or emotion words and expand the database by finding other words with context-specific characteristics in a large corpus. While corpus-based approaches take context into account, their performance still varies across domains, since a word in one domain can have a different orientation in another domain.
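A dictionary-based expansion can be sketched as follows. The thesaurus `TOY_SYNONYMS`, the seed lists and the depth limit are hypothetical stand-ins; a real system would draw on a resource such as WordNet, and this sketch expands through synonyms only.

```python
from collections import Counter


def expand_lexicon(seeds, synonyms, max_depth=2):
    """Grow each emotion's seed list by following synonym links up to max_depth steps."""
    lexicon = {}
    for emotion, words in seeds.items():
        seen = set(words)
        frontier = set(words)
        for _ in range(max_depth):
            # Words reachable from the current frontier that are not yet known.
            frontier = {s for w in frontier for s in synonyms.get(w, ())} - seen
            seen |= frontier
        for word in seen:
            # The first emotion to claim a word keeps it.
            lexicon.setdefault(word, emotion)
    return lexicon


def classify(text, lexicon):
    """Return the emotion with the most lexicon hits in the text, or None if there are none."""
    counts = Counter(lexicon[w] for w in text.lower().split() if w in lexicon)
    return counts.most_common(1)[0][0] if counts else None


# Hypothetical toy thesaurus and seed words, for illustration only.
TOY_SYNONYMS = {
    "happy": ["glad", "joyful"],
    "glad": ["pleased"],
    "sad": ["unhappy", "gloomy"],
    "gloomy": ["miserable"],
}
SEEDS = {"joy": ["happy"], "sadness": ["sad"]}

lexicon = expand_lexicon(SEEDS, TOY_SYNONYMS)
print(classify("I am so pleased and glad today", lexicon))
```

Because the classifier only counts isolated words, a sentence such as "not happy at all" would still be scored as joy, which illustrates the difficulty with linguistic nuance noted above.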

Statistical Methods

Statistical methods commonly involve the use of supervised machine learning algorithms, in which a large set of annotated data is fed into the algorithm so that the system can learn and predict the appropriate emotion types. This approach normally involves two sets of data: the training set and the testing set, where the former is used to learn the attributes of the data, while the latter is used to validate the performance of the machine learning algorithm. Machine learning algorithms generally provide more reasonable classification accuracy than other approaches, but one of the challenges in achieving good results is the need for a sufficiently large training set.

Some of the most commonly used machine learning algorithms include Support Vector Machines (SVM), Naive Bayes, and Maximum Entropy. Deep learning, which can be applied in both supervised and unsupervised settings, is also widely employed in emotion recognition. Commonly used architectures of Artificial Neural Network (ANN) include Convolutional Neural Network (CNN), Long Short-term Memory (LSTM), and Extreme Learning Machine (ELM). The popularity of deep learning approaches in the domain of emotion recognition may be mainly attributed to their success in related applications such as computer vision, speech recognition, and Natural Language Processing (NLP).
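As an illustration of the statistical approach, the following minimal sketch trains a multinomial Naive Bayes classifier with add-one smoothing on a training set and measures accuracy on a separate testing set. The sentences and labels are invented toy data, far smaller than the large annotated set a real system would need.

```python
from collections import Counter, defaultdict
from math import log


def train_naive_bayes(examples):
    """Count class frequencies and per-class word frequencies from (text, label) pairs."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in examples:
        class_counts[label] += 1
        for word in text.lower().split():
            word_counts[label][word] += 1
            vocab.add(word)
    return class_counts, word_counts, vocab


def predict(model, text):
    """Pick the label with the highest log-posterior; words outside the vocabulary are ignored."""
    class_counts, word_counts, vocab = model
    total = sum(class_counts.values())
    best_label, best_score = None, float("-inf")
    for label, count in class_counts.items():
        score = log(count / total)  # log prior
        denominator = sum(word_counts[label].values()) + len(vocab)
        for word in text.lower().split():
            if word in vocab:
                # Add-one (Laplace) smoothing avoids zero probabilities.
                score += log((word_counts[label][word] + 1) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Invented toy data, for illustration only.
train_set = [
    ("i am so happy today", "joy"),
    ("what a wonderful happy surprise", "joy"),
    ("i feel sad and lonely", "sadness"),
    ("this is a sad gloomy day", "sadness"),
]
test_set = [("such a happy day", "joy"), ("lonely and sad", "sadness")]

model = train_naive_bayes(train_set)
accuracy = sum(predict(model, text) == label for text, label in test_set) / len(test_set)
print(accuracy)
```

Keeping the testing set out of training is what makes the accuracy figure meaningful; with only a handful of examples, as here, the number says little about real-world performance.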

Hybrid Approaches

Hybrid approaches in emotion recognition are essentially a combination of knowledge-based techniques and statistical methods, which exploit complementary characteristics of both techniques. Works that have applied an ensemble of knowledge-driven linguistic elements and statistical methods include sentic computing and iFeel, both of which have adopted the concept-level knowledge-based resource SenticNet. Such knowledge-based resources play an important role in the emotion classification process of hybrid approaches. Since hybrid techniques gain the benefits offered by both knowledge-based and statistical approaches, they tend to have better classification performance than knowledge-based or statistical methods employed independently. A downside of hybrid techniques, however, is the computational complexity of the classification process.
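One simple way to combine the two sources of evidence is a weighted average of a lexicon score and a statistical probability. This is only an assumed combination rule for illustration, not the method used by sentic computing or iFeel; the lexicon, the `statistical_probs` values (a stand-in for the output of a trained classifier) and the `weight` parameter are all hypothetical.

```python
def lexicon_scores(text, lexicon, emotions):
    """Share of lexicon hits per emotion; uniform scores if no word matches."""
    hits = [lexicon[w] for w in text.lower().split() if w in lexicon]
    if not hits:
        return {emotion: 1 / len(emotions) for emotion in emotions}
    return {emotion: hits.count(emotion) / len(hits) for emotion in emotions}


def hybrid_classify(text, lexicon, statistical_probs, weight=0.5):
    """Blend lexicon evidence with probabilities from a statistical model.

    statistical_probs stands in for the output of a trained classifier;
    weight controls how much the lexicon contributes (0 = none, 1 = only lexicon).
    """
    emotions = list(statistical_probs)
    lex = lexicon_scores(text, lexicon, emotions)
    combined = {
        emotion: weight * lex[emotion] + (1 - weight) * statistical_probs[emotion]
        for emotion in emotions
    }
    return max(combined, key=combined.get), combined


# Hypothetical inputs: a one-word lexicon and an undecided statistical model.
toy_lexicon = {"gloomy": "sadness"}
statistical_probs = {"joy": 0.55, "sadness": 0.45}

label, combined = hybrid_classify("a gloomy afternoon", toy_lexicon, statistical_probs)
print(label, combined)
```

In this example the statistical model alone would narrowly choose joy, while the lexicon hit on "gloomy" tips the combined decision to sadness. The cost is that both components have to be evaluated for every input.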

Datasets

Data is an integral part of the existing approaches in emotion recognition and in most cases it is a challenge to obtain annotated data that is necessary to train machine learning algorithms. While most publicly available data are not annotated, there are existing annotated datasets available to perform emotion recognition research. For the task of classifying different emotion types from multimodal sources in the form of texts, audio, videos or physiological signals, the following datasets are available:

HUMAINE: provides natural clips with emotion words and context labels in multiple modalities

Belfast database: provides clips with a wide range of emotions from TV programs and interview recordings

SEMAINE: provides audiovisual recordings between a person and a virtual agent and contains emotion annotations such as anger, happiness, fear, disgust, sadness, contempt, and amusement

IEMOCAP: provides recordings of dyadic sessions between actors and contains emotion annotations such as happiness, anger, sadness, frustration, and neutral state

eNTERFACE: provides audiovisual recordings of subjects from seven nationalities and contains emotion annotations such as happiness, anger, sadness, surprise, disgust, and fear

DEAP: provides electroencephalography (EEG), peripheral physiological signal, and face video recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching music videos

DREAMER: provides electroencephalography (EEG) and electrocardiography (ECG) recordings, as well as emotion annotations in terms of valence, arousal, and dominance of people watching film clips

Applications

Developers often use Paul Ekman’s Facial Action Coding System as a guide.

Emotion recognition is used for a variety of purposes. Affectiva uses it to help advertisers and content creators sell their products more effectively. Affectiva also makes a Q-sensor that gauges the emotions of autistic children. Emotient was a startup company that used artificial intelligence to predict “attitudes and actions based on facial expressions”. Apple indicated its intention to buy Emotient in January 2016. nViso provides real-time emotion recognition for web and mobile applications through a real-time API. Visage Technologies AB offers emotion estimation as part of its Visage SDK for marketing, scientific research and similar purposes. Eyeris is an emotion recognition company that works with embedded system manufacturers, including car makers and social robotics companies, on integrating its face analytics and emotion recognition software, and with video content creators to help them measure the perceived effectiveness of their short- and long-form video content. Emotion recognition and emotion analysis are being studied by companies and universities around the world.

Lie detection

Multi-sensory emotion perception is helpful in assessing the truthfulness of utterances, more specifically in detecting lies, where a lie is understood as a deliberately false, deceptive statement. While there is no universally valid indicator of lying, facial expressions, gestures, language and posture can provide clues. Unconscious or uncontrollable signals, such as pupil width, gaze direction or blushing, are relatively reliable. Furthermore, attention should increasingly be paid to discrepancies between the various verbal and non-verbal expressions of a person.

Source from Wikipedia