The Academy Color Encoding System (ACES) is a color image encoding system created by hundreds of industry professionals under the auspices of the Academy of Motion Picture Arts and Sciences. ACES allows for a fully encompassing color accurate workflow, with “seamless interchange of high quality motion picture images regardless of source”.

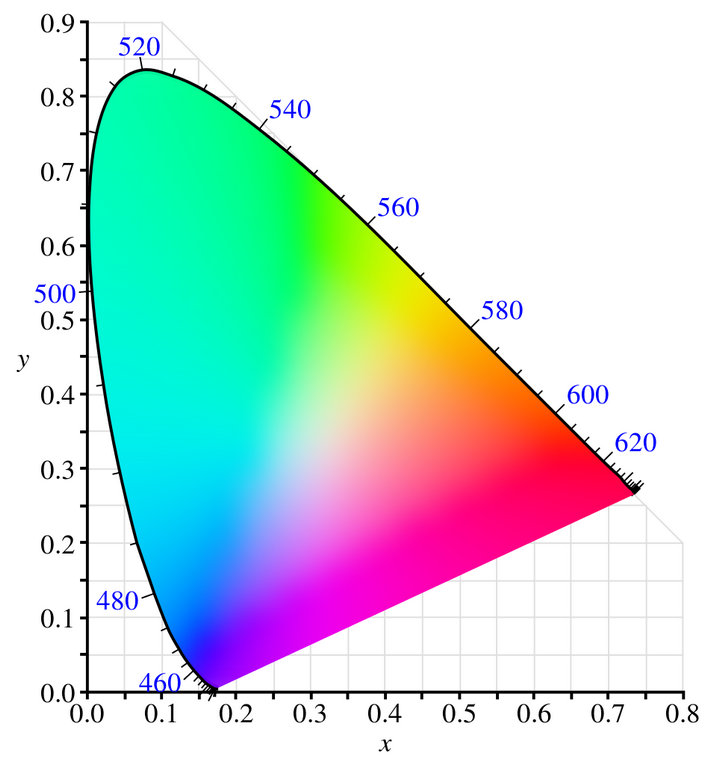

The system defines its own color primaries that completely encompass the visible spectral locus as defined by the CIE xyY specification. The white point approximates the CIE D60 standard illuminant, and ACES-compliant files are encoded as 16-bit half-floats, which allows ACES OpenEXR files to encode 30 stops of scene information. ACES supports both high dynamic range (HDR) and wide color gamut (WCG).
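The 30-stop figure can be checked with a little arithmetic. The sketch below assumes that the figure refers to the range of normal (non-subnormal) IEEE 754 half-precision values; the constant and function names are illustrative only.

```python
import math

# IEEE 754 half-precision (binary16) limits.
HALF_MAX = 65504.0            # largest finite half-float value
HALF_MIN_NORMAL = 2.0 ** -14  # smallest positive normal half-float value


def stops_between(low: float, high: float) -> float:
    """Number of stops (doublings of light) separating two linear values."""
    return math.log2(high / low)


# Assumption: subnormal values are ignored when counting stops.
normal_range_stops = stops_between(HALF_MIN_NORMAL, HALF_MAX)
print(f"{normal_range_stops:.2f} stops")  # prints "30.00 stops"
```

Including subnormal values would extend the range further, at reduced precision.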

Version 1.0 was released in December 2014 and has since been implemented by multiple vendors and used on multiple motion pictures and television shows. ACES received a Television Academy Emmy Engineering Award in 2012. The system is standardized in part by the Society of Motion Picture and Television Engineers (SMPTE) standards body. Changes to the ACES specifications, announcements, news, discussion and other information are regularly posted at http://www.ACESCentral.com.

Hundreds of productions, from motion pictures and television shows to commercials and VR content, have been produced using ACES, including The Lego Batman Movie (2017), Guardians of the Galaxy Vol. 2 (2017), King Arthur: Legend of the Sword (2017), The Grand Tour (2016 TV series), Cafe Society (2016), Bad Santa 2 (2016), The Legend of Tarzan (2016), Chef’s Table (2016 TV series), Chappie (2015), The Wedding Ringer (2015), Baahubali: The Beginning (2015) and The Wave (2015).

Background

The ACES project began development in 2004 in collaboration with 50 industry technologists. It was prompted by the then-recent incursion of digital technologies into the motion picture industry. The traditional motion picture workflow had been based on film negatives; the digital transition added the scanning of negatives and digital camera acquisition. The industry lacked a color management scheme for diverse sources coming from a variety of digital motion picture cameras and film. The ACES system is designed to control the complexity inherent in managing the multitude of file formats, image encodings, metadata transfers, color reproduction methods, and image interchanges present in the current motion picture workflow.

System overview

The system comprises several components that are designed to work together to create a uniform workflow:

Academy Color Encoding Specification (ACES): The specification that defines the ACES colorspace, allowing half-float high-precision encoding in scene linear light as exposed in a camera, and archival storage in files.

Input Device Transform (IDT): This name was deprecated in version 1.0 and replaced by Input Transform. The process that takes captured images from any ingestible source material and transforms the content into the ACES color space and encoding specifications. There are many IDTs, each specific to a class of capture device and likely specified by the manufacturer using ACES guidelines. It is recommended that a different IDT be used for tungsten versus daylight lighting conditions.

Input Transform: The current name for an Input Device Transform (IDT), as of ACES version 1.0.

Look Modification Transform (LMT): A specific change in look that is applied systematically in combination with the RRT and ODTs (part of the ACES Viewing Transform).

Output Transform: As per the ACES version 1.0 naming convention, this is the overall mapping from the standard scene-referred ACES colorimetry (SMPTE 2065-1 color space) to the output-referred colorimetry of a specific device or family of devices. It is always the concatenation of the Reference Rendering Transform (RRT) and a specific Output Device Transform (ODT), as defined below. For this reason the Output Transform is usually abbreviated as “RRT+ODT”.

Reference Rendering Transform (RRT): Converts the scene-referred colorimetry to display-referred, and resembles traditional film image rendering with an S-shaped curve. It has a larger gamut and dynamic range available to allow for rendering to any output device (even ones not yet in existence).

Output Device Transform (ODT): A guideline for rendering the large gamut and wide dynamic range of the RRT to a physically realized output device with limited gamut and dynamic range. There are many ODTs, which will likely be generated by manufacturers according to the ACES guidelines.

Academy Viewing Transform: The combination of an LMT and an Output Transform, i.e. “LMT+RRT+ODT”.

Academy Printing Density (APD): A reference printing density defined by AMPAS for calibrating film scanners and film recorders.

Academy Density Exchange (ADX): A densitometric encoding similar to Kodak’s Cineon used for capturing data from film scanners.

ACES color space SMPTE Standard 2065-1 (ACES2065-1): The principal scene-referred color space used in the ACES framework for storing images. Standardized by SMPTE as document ST2065-1. Its gamut includes the full CIE standard observer’s gamut with radiometrically linear transfer characteristics.

ACEScc (ACES color correction space): A color space definition that is slightly larger than the ITU Rec.2020 color space and has logarithmic transfer characteristics for improved use within color correctors and grading tools.

ACEScct (ACES color correction space with toe): A color space definition that is slightly larger than the ITU Rec.2020 color space and is logarithmically encoded for improved use within color correctors and grading tools, with a toe whose behavior resembles that of Cineon files.

ACEScg (ACES computer graphics space): A color space definition that is slightly larger than the ITU Rec.2020 color space and linearly encoded for improved use within computer graphics rendering and compositing tools.

ACESproxy (ACES proxy color space): A color space definition that is slightly larger than the ITU Rec.2020 color space, logarithmically encoded (like ACEScc, not like ACEScct) and represented as integers with either 10 or 12 bits per channel. This encoding is designed exclusively for transporting codevalues across digital devices and infrastructure that do not support floating-point encodings, such as SDI cables and monitors.
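The way these components chain together can be sketched in a few lines. In the example below only the ordering (Input Transform, then LMT, then RRT, then ODT) comes from the definitions above. Every transform body is a toy stand-in invented for illustration, not the actual ACES math, and the helper names `compose` and `per_channel` are hypothetical.

```python
from functools import reduce
from typing import Callable, Tuple

RGB = Tuple[float, float, float]
Transform = Callable[[RGB], RGB]


def compose(*stages: Transform) -> Transform:
    """Chain transforms left to right: compose(f, g)(x) == g(f(x))."""
    def run(rgb: RGB) -> RGB:
        return reduce(lambda value, stage: stage(value), stages, rgb)
    return run


def per_channel(fn: Callable[[float], float]) -> Transform:
    """Lift a scalar function to act on each RGB channel independently."""
    return lambda rgb: (fn(rgb[0]), fn(rgb[1]), fn(rgb[2]))


# Toy stand-ins (assumptions, NOT the real ACES transforms).
input_transform = per_channel(lambda c: c * 1.0)   # pretend the source is already scene-linear
lmt = per_channel(lambda c: c * 0.9)               # a "look": slightly darker
rrt = per_channel(lambda c: max(c, 0.0) / (1.0 + max(c, 0.0)))      # simple tone curve
odt = per_channel(lambda c: min(max(c, 0.0), 1.0) ** (1.0 / 2.4))   # clamp + display gamma

# Output Transform = RRT + ODT; Viewing Transform = LMT + RRT + ODT.
output_transform = compose(rrt, odt)
viewing_transform = compose(lmt, output_transform)

scene_rgb = input_transform((0.18, 0.18, 0.18))
display_rgb = viewing_transform(scene_rgb)
print(display_rgb)
```

Building the Viewing Transform out of the Output Transform mirrors the naming above: swapping the ODT for another display changes only one stage of the chain.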

ACES Color Spaces

ACES 1.0 defines a total of six color spaces covering the whole ACES framework as it pertains to the generation, transport, processing, and storage of still and moving images. These color spaces have a few characteristics in common:

They are based on the RGB color-additive model.

Their codevalues are scene-referred, i.e. the numerical values represent some form of color-neutral numerical encoding of light (called “transfer characteristics”) as it is emitted and reflected by real scene objects. As a consequence: there is no theoretical upper bound to the codevalues (as there can always be an ideal, higher-energy emitter); the all-zero codevalue triple corresponds to the optical absence of light (dark body), though negative codevalues are possible as they correspond to tristimulus values outside the gamut of the primaries. Usually, scene-referred codevalues captured by a camera (over a predefined exposure time) are directly related to luminous exposure via the same transfer characteristics.

The reference illuminant (defining the codevalues of the whitepoint of a perfect diffuser) is chosen to be the CIE Standard D60, with chromaticities (0.32168,0.33767).

The six color spaces use RGB color primaries from one of two sets, called AP0 and AP1 respectively (“ACES Primaries” #0 and #1); their chromaticity coordinates are given in the table below.

| primaries | AP0 Red | AP0 Green | AP0 Blue | AP1 Red | AP1 Green | AP1 Blue |
|---|---|---|---|---|---|---|
| x | 0.7347 | 0.0000 | 0.0001 | 0.713 | 0.165 | 0.128 |
| y | 0.2653 | 1.0000 | -0.0770 | 0.293 | 0.830 | 0.044 |
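Given the chromaticities in the table and the D60 white point quoted earlier, an RGB-to-CIE XYZ matrix can be derived with the usual normalized-primary-matrix construction. The following is a minimal sketch of that general technique rather than code taken from any ACES specification; the function names are illustrative, and the resulting coefficients should be checked against the published standard before use.

```python
from typing import List, Sequence, Tuple

Chromaticity = Tuple[float, float]
Matrix = List[List[float]]

# Values copied from the table and the white point quoted in this section.
AP0 = ((0.7347, 0.2653), (0.0000, 1.0000), (0.0001, -0.0770))
AP1 = ((0.713, 0.293), (0.165, 0.830), (0.128, 0.044))
D60_WHITE = (0.32168, 0.33767)


def xy_to_xyz(xy: Chromaticity) -> Tuple[float, float, float]:
    """Convert xy chromaticity to XYZ tristimulus values with Y = 1."""
    x, y = xy
    return (x / y, 1.0, (1.0 - x - y) / y)


def det3(m: Matrix) -> float:
    """Determinant of a 3x3 matrix."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def rgb_to_xyz_matrix(primaries: Sequence[Chromaticity],
                      white: Chromaticity) -> Matrix:
    """Build the matrix that maps linear RGB to XYZ so that RGB (1, 1, 1)
    lands on the white point with Y = 1."""
    columns = [xy_to_xyz(p) for p in primaries]
    m = [[columns[j][i] for j in range(3)] for i in range(3)]
    w = xy_to_xyz(white)
    d = det3(m)
    # Solve m * scales = w with Cramer's rule.
    scales = []
    for j in range(3):
        replaced = [row[:] for row in m]
        for i in range(3):
            replaced[i][j] = w[i]
        scales.append(det3(replaced) / d)
    return [[m[i][j] * scales[j] for j in range(3)] for i in range(3)]


def apply(matrix: Matrix, rgb: Sequence[float]) -> Tuple[float, ...]:
    """Multiply a 3x3 matrix by an RGB (or XYZ) triple."""
    return tuple(sum(matrix[i][j] * rgb[j] for j in range(3)) for i in range(3))


ap0_to_xyz = rgb_to_xyz_matrix(AP0, D60_WHITE)
for row in ap0_to_xyz:
    print(["%.6f" % value for value in row])
```

The middle row of the matrix holds the luminance weights of the three primaries. For AP0 the blue weight comes out negative, which reflects the fact that the AP0 blue primary is imaginary.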

AP0 is defined as the smallest set of primaries that encloses the whole CIE 1931 standard-observer spectral locus, thus theoretically including, and exceeding, all the color stimuli that can be seen by the average human eye. The concept of using non-realizable or imaginary primaries is not new, and is often employed in color systems that aim to render a larger portion of the visible spectral locus. ProPhoto RGB (developed by Kodak) and ARRI Wide Gamut (developed by Arri) are two such color spaces. Values outside the spectral locus are maintained on the assumption that they will later be manipulated, through color timing or in other cases of image interchange, to eventually lie within the locus. As a result, color values are not “clipped” or “crushed” by post-production manipulation.

AP1 is instead contained well within the CIE standard observer’s chromaticity diagram, yet is still considered “wide gamut”. It was conceived with primaries “bent” to be closer to those of display-referred color spaces (like sRGB) for two main reasons:

Color-imaging and color-grading operations acting independently on the three RGB channels produce variations that are perceived naturally on the red, green, and blue components. This might not be the case when operating on the “unbent” RGB axes of the AP0 primaries.

All the codevalues contained in the [0,1] range represent colors that, converted into output-referred colorimetry via their respective Output Transforms (see above), can be displayed with either present or future projection and display technologies.

ACES2065-1

This is the standard ACES color space; it is the only one based on the AP0 RGB primaries and the only one intended, by design, for mid- and long-term storage in image and video files. It uses photometrically linear transfer characteristics (more simply, and improperly, said to have a gamma of 1.0). ACES2065-1 codevalues are linear values scaled in an Input Transform so that:

a perfectly white diffuser would map to (1, 1, 1) RGB codevalue.

a photographic exposure of an 18% grey card would map to (0.18,0.18,0.18) RGB codevalue.

ACES2065-1 codevalues often exceed 1.0 for ordinary scenes, and a very high range of speculars and highlights can be maintained in the encoding. The internal processing and storage of ACES2065-1 codevalues must use floating-point arithmetic with at least 16 bits per channel. Pre-release versions of ACES, i.e. those prior to 1.0, defined ACES2065-1 as the only color space. Legacy applications might therefore mean ACES2065-1 when referring to “the ACES color space”. Furthermore, because of its importance and linear characteristics, and because it is the one based on AP0 primaries, it is also improperly referred to as “Linear ACES”, “ACES.lin”, “SMPTE2065-1” or even “the AP0 color space”.
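The scaling described above can be made concrete with a short sketch. It assumes only the two anchor points given in this section (a perfect white diffuser at 1.0 and an 18% grey card at 0.18); the helper names are illustrative, and the half-float round trip uses Python's standard `struct` format code `e` for IEEE 754 binary16.

```python
import math
import struct

MID_GREY = 0.18  # codevalue of an 18% grey card, as stated above


def stops_to_codevalue(stops: float) -> float:
    """Linear codevalue for an exposure given in stops relative to mid grey."""
    return MID_GREY * 2.0 ** stops


def codevalue_to_stops(value: float) -> float:
    """Exposure in stops relative to mid grey for a positive linear codevalue."""
    return math.log2(value / MID_GREY)


def through_half_float(value: float) -> float:
    """Round-trip a value through 16-bit half-float storage."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


# A perfect white diffuser (codevalue 1.0) sits about 2.47 stops above mid grey.
print(round(codevalue_to_stops(1.0), 2))

# A highlight five stops above mid grey exceeds 1.0 and still survives storage.
highlight = stops_to_codevalue(5.0)
print(highlight, through_half_float(highlight))
```

Because the encoding is linear, each additional stop simply doubles the codevalue, which is why ordinary highlights quickly exceed 1.0.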

Standards are defined for storing images in the ACES2065-1 color space, particularly with regard to metadata, so that applications honoring the ACES framework can identify the color space encoding from the metadata rather than inferring it by other means. For example:

SMPTE ST2065-4 defines the correct encoding of ACES2065-1 still images within OpenEXR files and file sequences, along with their mandatory metadata flags and fields.

SMPTE ST2065-5 defines the correct embedding of ACES2065-1 video sequences within MXF files, along with their mandatory metadata fields.

ACEScg

ACEScc
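As an illustration of the logarithmic transfer characteristics attributed to ACEScc earlier, the sketch below encodes linear values into ACEScc codevalues. The constants (9.72, 17.52, and the 2^-15 breakpoint) are recalled from the Academy's ACEScc specification, not from this article, so they should be verified against that document. The function name is illustrative, and the input is assumed to be linear data already expressed in AP1 primaries.

```python
import math


def lin_to_acescc(lin: float) -> float:
    """Encode one linear (AP1) channel value as an ACEScc codevalue.

    Constants are assumptions recalled from the ACEScc specification.
    """
    if lin <= 0.0:
        # Non-positive input maps to the encoding floor.
        return (math.log2(2.0 ** -16) + 9.72) / 17.52
    if lin < 2.0 ** -15:
        # Very small values use a softened curve near zero.
        return (math.log2(2.0 ** -16 + lin * 0.5) + 9.72) / 17.52
    return (math.log2(lin) + 9.72) / 17.52


mid_grey_cc = lin_to_acescc(0.18)
print(f"{mid_grey_cc:.4f}")  # prints "0.4136"
```

One stop of exposure corresponds to a constant step of 1/17.52 in the encoded value, which is what makes offset-style grading controls behave evenly across the tonal range.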

Converting ACES2065-1 RGB values to CIE XYZ values

Converting CIE XYZ values to ACES2065-1 values

Source From Wikipedia