Automated planning and scheduling

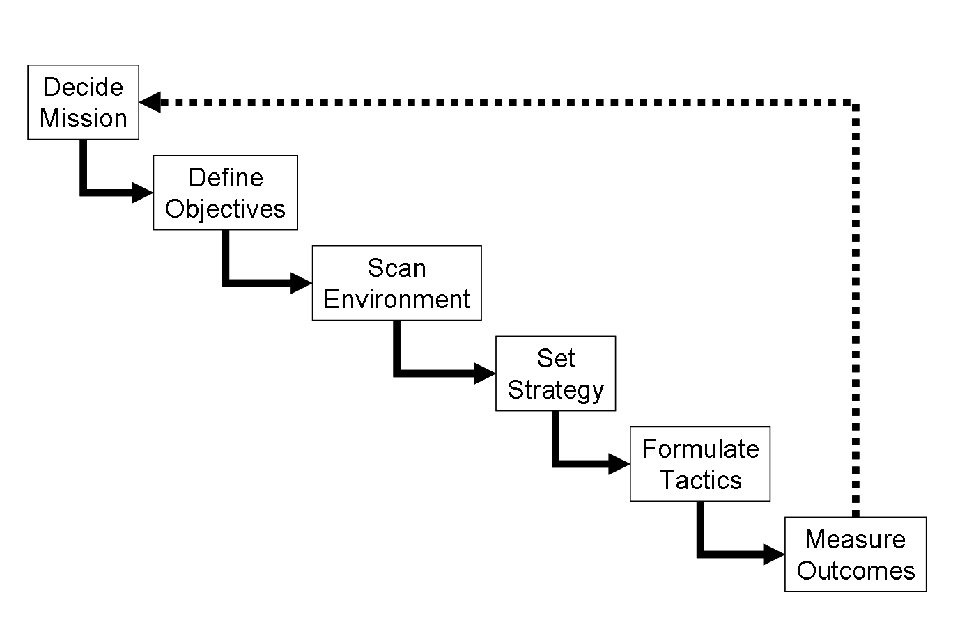

Automated planning and scheduling, sometimes denoted as simply AI Planning, is a branch of artificial intelligence that concerns the realization of strategies or action sequences, typically for execution by intelligent agents, autonomous robots and unmanned vehicles. Unlike classical control and classification problems, the solutions are complex and must be discovered and optimized in multidimensional space. Planning is also related to decision theory.

In known environments with available models, planning can be done offline. Solutions can be found and evaluated prior to execution. In dynamically unknown environments, the strategy often needs to be revised online. Models and policies must be adapted. Solutions usually resort to iterative trial and error processes commonly seen in artificial intelligence. These include dynamic programming, reinforcement learning and combinatorial optimization. Languages used to describe planning and scheduling are often called action languages.

Overview

Given a description of the possible initial states of the world, a description of the desired goals, and a description of a set of possible actions, the planning problem is to synthesise a plan that is guaranteed (when applied to any of the initial states) to generate a state which contains the desired goals (such a state is called a goal state).

The difficulty of planning is dependent on the simplifying assumptions employed. Several classes of planning problems can be identified depending on the properties the problems have in several dimensions.

Are the actions deterministic or nondeterministic? For nondeterministic actions, are the associated probabilities available?

Are the state variables discrete or continuous? If they are discrete, do they have only a finite number of possible values?

Can the current state be observed unambiguously? There can be full observability and partial observability.

How many initial states are there, finite or arbitrarily many?

Do actions have a duration?

Can several actions be taken concurrently, or is only one action possible at a time?

Is the objective of a plan to reach a designated goal state, or to maximize a reward function?

Is there only one agent or are there several agents? Are the agents cooperative or selfish? Do all of the agents construct their own plans separately, or are the plans constructed centrally for all agents?

The simplest possible planning problem, known as the Classical Planning Problem, is determined by:

a unique known initial state,

durationless actions,

deterministic actions,

which can be taken only one at a time,

and a single agent.

Since the initial state is known unambiguously, and all actions are deterministic, the state of the world after any sequence of actions can be accurately predicted, and the question of observability is irrelevant for classical planning.

Further, plans can be defined as sequences of actions, because it is always known in advance which actions will be needed.

With nondeterministic actions or other events outside the control of the agent, the possible executions form a tree, and plans have to determine the appropriate actions for every node of the tree.

Discrete-time Markov decision processes (MDP) are planning problems with:

durationless actions,

nondeterministic actions with probabilities,

full observability,

maximization of a reward function,

and a single agent.

When full observability is replaced by partial observability, planning corresponds to partially observable Markov decision process (POMDP).

If there are more than one agent, we have multi-agent planning, which is closely related to game theory.

Domain Independent Planning

In AI Planning, planners typically input a domain model (a description of a set of possible actions which model the domain) as well as the specific problem to be solved specified by the initial state and goal, in contrast to those in which there is no input domain specified. Such planners are called “Domain Independent” to emphasis the fact that they can solve planning problems from a wide range of domains. Typical examples of domains are block stacking, logistics, workflow management, and robot task planning. Hence a single domain independent planner can be used to solve planning problems in all these various domains. On the other hand, a route planner is typical of a domain specific planner.

Planning Domain Modelling Languages

The most commonly used languages for representing planning domains and specific planning problems, such as STRIPS and PDDL for Classical Planning, are based on state variables. Each possible state of the world is an assignment of values to the state variables, and actions determine how the values of the state variables change when that action is taken. Since a set of state variables induce a state space that has a size that is exponential in the set, planning, similarly to many other computational problems, suffers from the curse of dimensionality and the combinatorial explosion.

An alternative language for describing planning problems is that of hierarchical task networks, in which a set of tasks is given, and each task can be either realized by a primitive action or decomposed into a set of other tasks. This does not necessarily involve state variables, although in more realistic applications state variables simplify the description of task networks.

Algorithms for planning

Classical planning

forward chaining state space search, possibly enhanced with heuristics

backward chaining search, possibly enhanced by the use of state constraints (see STRIPS, graphplan)

partial-order planning

Classic planning

Traditional planning is based on two assumptions:

the determinism of actions. For example, the action “put a cube on the table” is deterministic. By executing it, we move from one state to another. On the contrary, “roll a dice” is non-deterministic because there are possible values. The “roll a dice” action does not fall within the scope of traditional planning.

the perfect observation. The agent (the robot, the program, etc.) knows the state of the world completely.

n classical planning, it is a question of looking for a sequence of actions (for example, “take a pan”, “put water”, “put pasta”, “light a fire”, “reach”, ” to put out the fire”). The A * algorithm is a typical example of a classical planning algorithm, often used in introductory courses for its simplicity. Here are some algorithmic techniques used in classical planners:

the forward search in a state space: heuristic search planning (HSP) algorithm, Fast-Forward algorithm (FF),

the back search in a state space,

the search before in a space of plans, graphplan

relaxation of a planning problem: calculating a relaxed problem for which it is easier to know if there is a plan that helps solve the initial problem

the transformation to a satisfiability problem of a formula of propositional logic.

Bylander demonstrated in 1994 that conventional planning is PSPACE-complete.

Reduction to other problems

reduction to the propositional satisfiability problem (satplan).

reduction to Model checking – both are essentially problems of traversing state spaces, and the classical planning problem corresponds to a subclass of model checking problems.

Temporal planning

Temporal planning can be solved with methods similar to classical planning. The main difference is, because of the possibility of several, temporally overlapping actions with a duration being taken concurrently, that the definition of a state has to include information about the current absolute time and how far the execution of each active action has proceeded. Further, in planning with rational or real time, the state space may be infinite, unlike in classical planning or planning with integer time. Temporal planning is closely related to scheduling problems. Temporal planning can also be understood in terms of timed automata.

Probabilistic planning

Probabilistic planning can be solved with iterative methods such as value iteration and policy iteration, when the state space is sufficiently small. With partial observability, probabilistic planning is similarly solved with iterative methods, but using a representation of the value functions defined for the space of beliefs instead of states.

Preference-based planning

In preference-based planning, the objective is not only to produce a plan but also to satisfy user-specified preferences. A difference to the more common reward-based planning, for example corresponding to MDPs, preferences don’t necessarily have a precise numerical value.

Conditional planning

Deterministic planning was introduced with the STRIPS planning system, which is a hierarchical planner. Action names are ordered in a sequence and this is a plan for the robot. Hierarchical planning can be compared with an automatic generated behavior tree. The disadvantage is, that a normal behavior tree is not so expressive like a computer program. That means, the notation of a behavior graph contains action commands, but no loops or if-then-statements. Conditional planning overcomes the bottleneck and introduces an elaborated notation which is similar to a control flow, known from other programming languages like Pascal. It is very similar to program synthesis, that means a planner generates sourcecode which can be executed by an interpreter.

An early example of a conditional planner is “Warplan-C” which was introduced in the mid 1970s. Until now, the question was not answered what the difference is between a normal sequence and a complicated plan, which contains if-then-statements. It has to do with uncertainty at runtime of a plan. The idea is, that a plan can react to sensor signals which are unknown for the planner. The planner generates two choices in advance. For example, if an object was detected, then action A is executed, if an object is missing, then action B is executed. A major advantage of conditional planning is the ability to handle partial plans. An agent is not forced to plan everything from start to finish but can divide the problem into chunks. This helps to reduce the state space and solves much more complex problems.

Deployment of planning systems

The Hubble Space Telescope uses a short-term system called SPSS and a long-term planning system called Spike.

Other types of planning

In practice, it only checks rarely assumptions of classical planning. This is why many extensions have emerged.

Conforming Planning

We talk about conformant planning (conforming schedule) where the system is in an uncertain state, but no observation is possible. The agent then has beliefs about the real world. These problems are solved by techniques similar to those of traditional planning, but where the state space is exponential in the size of the problem, because of uncertainty about the current status. A solution for a problem of conforming schedule is a sequence of actions.

Contingency planning

Contingency planning (contingent planning) in which the environment is observable by means of sensors, which can be faulty. For a contingent planning problem, a plan is no longer a sequence of actions but a decision tree because each stage of the plan is represented by a set of states rather than a single perfectly observable state as in the case of classical planning.

Rintanen demonstrated in 2004 that if branching is added (contingent planning), the planning problem becomes EXPTIME -complete. A particular case of contiguous planning is represented by the problems FOND – for “fully-observable and non-deterministic”. If the goal is specified in LTLf (linear temporal logic over finite trace) then the problem is always EXPTIME-complete 16 and 2EXPTIME-complete for a purpose specification in LDLf.

If, moreover, one adds uncertainty to the traditional planning (ie compliant planning), it becomes EXPSPACE -complete. If we add both branching and uncertainty (planning both compliant and contingent), it becomes 2EXPTIME-complete.

Probabilistic planning

Kushmerick et al. introduced probabilistic planning. In their work, the effect of actions is described with probabilists. He gives the example of the action `the robot takes a block ‘described in the following way: if the gripper of the robot is dry, then the robot holds the block with a probability 0.95; if the clamp is wet, then the robot holds the block with a probability of 0.5.

This leads to the problem of policy generation (or strategy) for a Markov Decision Process (MDP) or (in the general case) a Partially Observable Markov Decision Process (POMDP).

Nonlinear Planning

Classic planning resolves sub-goals in a given order. The problem is that it sometimes leads to destroying what has already been built. This phenomenon is known as the Sussman anomaly.

Suppose an individual barefoot should be in the same condition as he is wearing his right shoe, his left shoe, his right sock and his left sock. If he seeks to achieve the goals in the order of the utterance, he will fail.

To solve this type of problem, one can move to partially ordered plans in which the order between the actions is fixed only when it is necessary (commitment at the latest or least commitment planning).

In the previous example, put the left shoe should be done after putting the sock left. Same for the right. On the other hand, the execution of the plan for the left is independent of the execution for the right. The overall plan is therefore partially ordered.

Planners able to handle this category of problem are said to be partial order (POP, NOAH, etc.).

Hierarchical planning

Generally, when planning, one can have a hierarchical information on the actions, that is to say a description on how complex actions are decomposed. For example, a complex action like “serving a coffee” can be broken down into the sequence of two complex actions “making a coffee” and then “bringing coffee”. Thus, there are planners, like ABSTRIPS, who in addition to the description of the actions, take as input the hierarchical description of the actions. One can for example begin to plan at a high level then go down in the detail if necessary (as does ABSTRIPS for example). The objective is then described using a hierarchical task network (HTN).

Temporal planning

Time planning allows you to express action times, conditions at the beginning, end and during the action, and effects at the beginning and at the end of the actions. PDDL 2.1 includes time planning. The method TLP-GP 1 constructs a graph and then solves temporal constraints using a SMT solver. One backtracke in case of inconsistency. Rintanen has demonstrated that concurrent time planning (multiple instances of the same parallel action is possible) is EXPSPACE-complete. On the other hand, if we relax these conditions, we can reduce ourselves to conventional planning and therefore the temporal planning with these restrictions becomes PSPACE-complete.

General planning

General planning consists of producing a plan that works for several starting environments.

Multi-Agent Planning

Multi-agent scheduling studies the completion of a task by multiple agents, for example, multiple robots. The generation of the plan and its execution is then often distributed / decentralized. An important aspect in the plans is the cooperation between agents. There is also a centralized scheduling algorithm, which relies on a generalization of STRIPS to the multi-agent framework, called MA-STRIPS. The algorithm was then distributed rendering, using a solver CSP distributed to manage the coordination between agents. The authors, Nissim, Brafman, and Domshlak, have experimentally verified that their algorithm is better than centralized scheduling when agents have little interaction with each other.

A major difficulty in multi-agent planning is the combinatorial explosion of all actions, which is exponential in the number of agents. In 2011, Jonsson and Rovatsos offers a solution to counteract this problem reduces to the classic single-agent planning. There is also a renewed use parallelization techniques for planning algorithms to scale.

Epistemic planning

The epistemic planning problem is to take into account the knowledge of agents in the description of the states and actions. Typically, the goal is a formula of the epistemic modal logic, such as “the agent knows that the agent b that the block C is on the block A” and the actions are represented with models of actions of the logic dynamic epistemic. The planning problem is undecidable in all its generality.

Objective :

Above (A, B) Λ Above (B, C)

Initial state :

[Above (A, Table) Λ Above (B, Table) Λ Above (C, A) Λ Lock (A) Λ Lock (B) Λ Lock (C) Λ Disclosure (B) Λ Disclosure (C)]

Available actions:

Move (b, x, y)

PRE-CONDITIONS: Above (b, x) Λ Uncovered (b) Λ Uncovered (y) Λ Block (b) Λ Block (y) Λ (b ≠ x) Λ (b ≠ y) Λ (x ≠ y)

ADDITIONAL: Above (b, y) Λ Discovered (x)

CANCELLATIONS: Above (b, x) Λ Discovered (y)

Move_tool (b, x)

PRECONDITIONS: Above (b, x) Λ Uncovered (b) Λ Uncovered (y) Λ Block (b) Λ (b ≠ x)

ADDITIONAL: Above (b, Table) Λ Discovered (x)

CANCELLATIONS: Above (b, x)

Example

Informal example of planning.

Target

have some fruit and vegetables in the fridge

Initial state

[empty fridge, at home, in slippers]

Available actions (unordered list)

go out home,

wear shoes,

take car keys,

reach the store by car,

reach the shop on foot,

go back home,

place purchases in the fridge,

enter the store,

take fruit,

take vegetables,

to pay.

Possible solution (ordered list)

[1- wear shoes, 2- take car keys, 3- get out of the house, 4- get to the store by car, 5- get into the store, 6- take fruit, 7- take vegetables, 8- pay, 9- go back to house, 10- place purchases in the fridge]

Another possible solution (ordered list):

[1- wear shoes, 2- go out, 3- get to the store by foot, 4- get into the store, 5- take vegetables, 6- take fruit, 7- pay, 8- go home, 9- place purchases in the fridge]

Conclusions

From the example we can see how:

the solutions can be multiple,

may be present actions that must be performed, inevitably, after others (I can not reach the store by car if I did not take the keys before),

there may exist actions that are reversible (take fruits and vegetables),

it is not necessary that the final plan contains all the actions in case of multiple actions or sequences of interchangeable actions (reach the shop on foot or by car).

Source from Wikipedia